This is the final part of a series about our “VLE Reimagined” project. For the background and more ideas, see parts 1 and 2.

This time we share two ideas with the common theme that they both employ AI.

It’s worth reiterating that in creating and exploring novel ideas in this project, our focus was on the creative journey rather than the destination. That’s particularly relevant with these two – our position is not to present these as “good”.

Instead, they provide a safe way to envision different possibilities and reflect on the difficult questions that they raise.

What if AI helped with my assignment… but not by giving me the answer?

AI Copilot challenges the general conversation around AI as a blanket “provide the answer” tool.

The idea is instead to give large language models (LLMs) a much more targeted remit: applying changes to existing material, specifically assignment briefs.

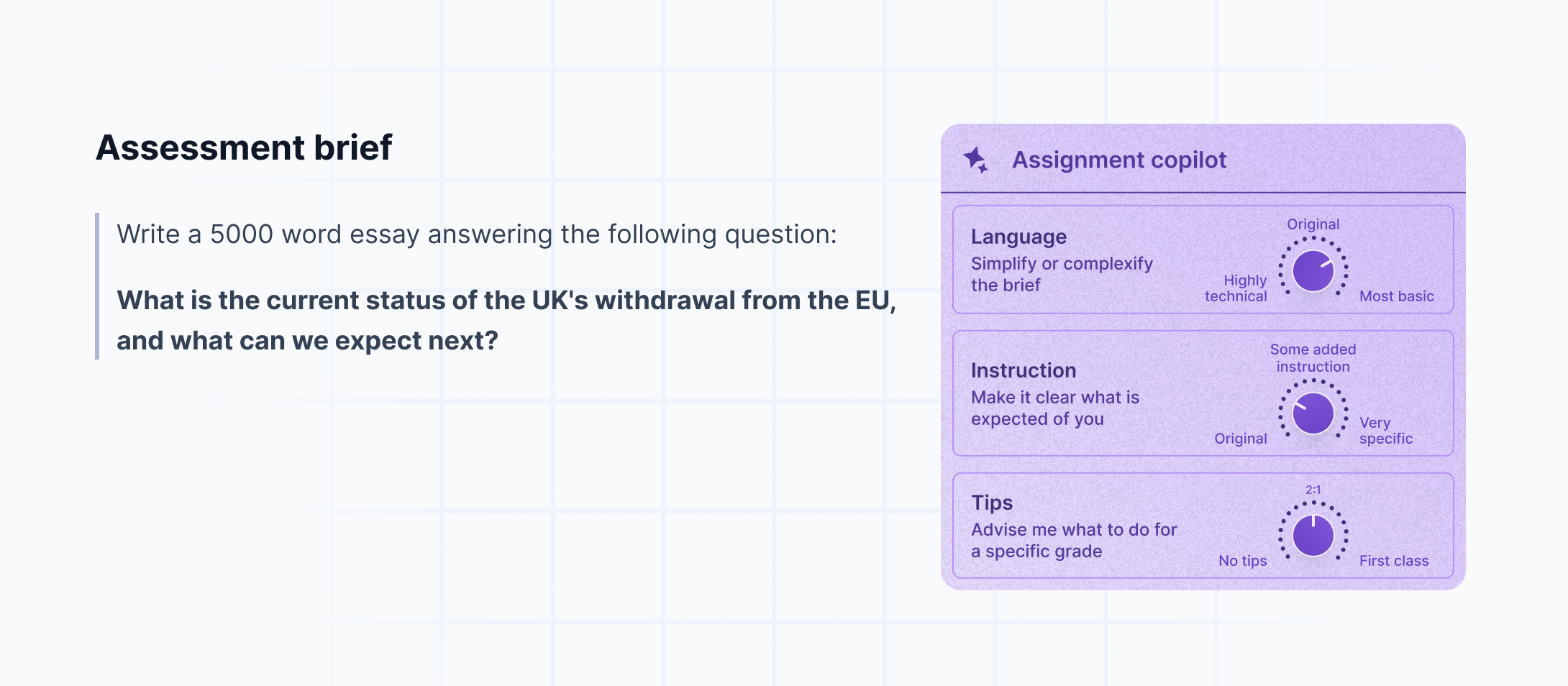

The three features we explored are fairly small in scope:

- Tweak the complexity of the language, making it simpler or perhaps more technical

- Make the instructions clearer

- Incorporate tips for how to succeed in the assignment

Our interest here lies less in the technicalities of how this might be delivered and more in the questions it raises. Questions like:

- Should a teacher be informed if this feature was used?

- If so, why? And would the answer be the same if peers were supporting each other to interpret assignment briefs?

- Are students using large language models in this way already? Beyond ‘essay generation’, in what ways are students using AI to assist their approaches to assessment?

- Should it impact grades? Again, if so – why? And in what way?

What if the AI detection tables were turned?

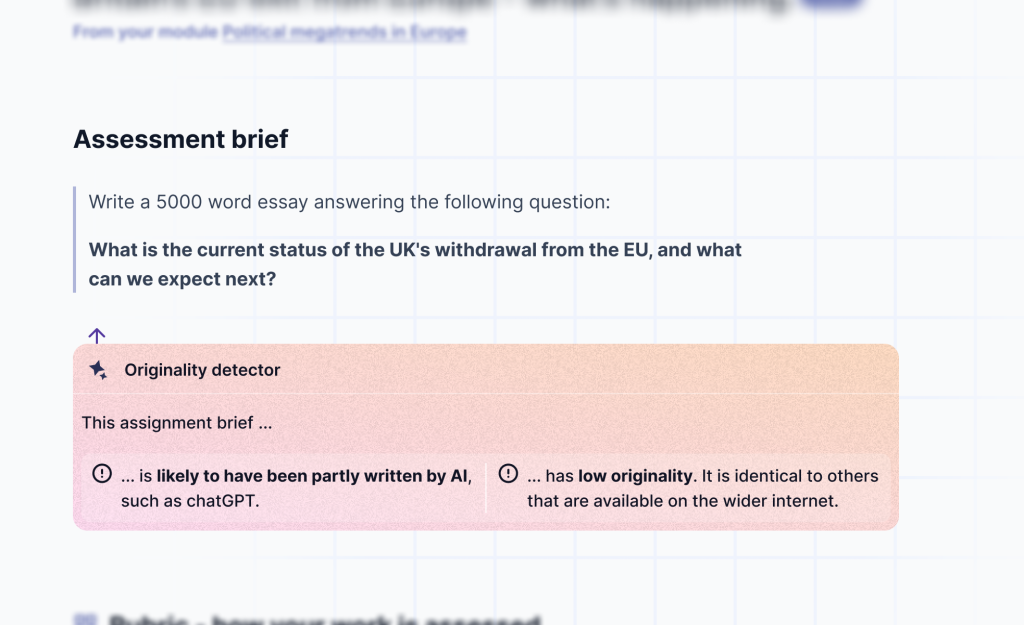

We observed that a great deal of the discourse around AI detection centres on analysing student work. If we take a broad definition of academic integrity – “Academic Integrity means being honest in your academic work” (source) – we can infer that it applies to academic staff too. Indeed, when speaking to students, Jisc’s National Centre for AI found that they expressed concerns about the use of AI to generate assessments.

So we asked a very simple question, which led to quite a stark concept – what if students were equipped in the same way as lecturers?

In this mockup, we see an originality detector providing its analysis of an assignment brief.

We thought this was a particularly spicy idea to mock up, and doing so helped raise a good mix of practical and ideological questions:

- Should students be equipped in this way?

- What would happen if they were?

- Technically, learners might be able to run these kinds of checks anyway using third-party tools. Is this a concern?

- If some content is “detected” as being AI-generated, how might learners respond to that, and would the response be a fair or desired one?

There is a difference between something being available and having that apparatus baked into the core experience of a VLE. Beyond just availability, building it in can create an expected workflow (e.g. “read the content → check the detector results → adjust how I perceive the content”).

Where next?

As we discussed in part 1, this project was primarily about skills development – practising ideation, divergent thinking, and our skills in analysing products and critiquing ideas. We don’t have plans to build the ideas we explored.

It was through creating these ideas and sharing them at Digifest 2023 that we saw the value in publishing them here.

You can read more on what we are doing around AI in education over at the National Centre for AI’s blog.

What do you think?

If you are interested in this project, or have ideas or reactions you’d like to discuss with us, you can reach us at innovation@jisc.ac.uk. We’re geared up to hear your thoughts. And if this has sparked some specific thoughts about your VLE, you can also

Are there other pieces of the edtech furniture that you’d like to explore new ideas for?