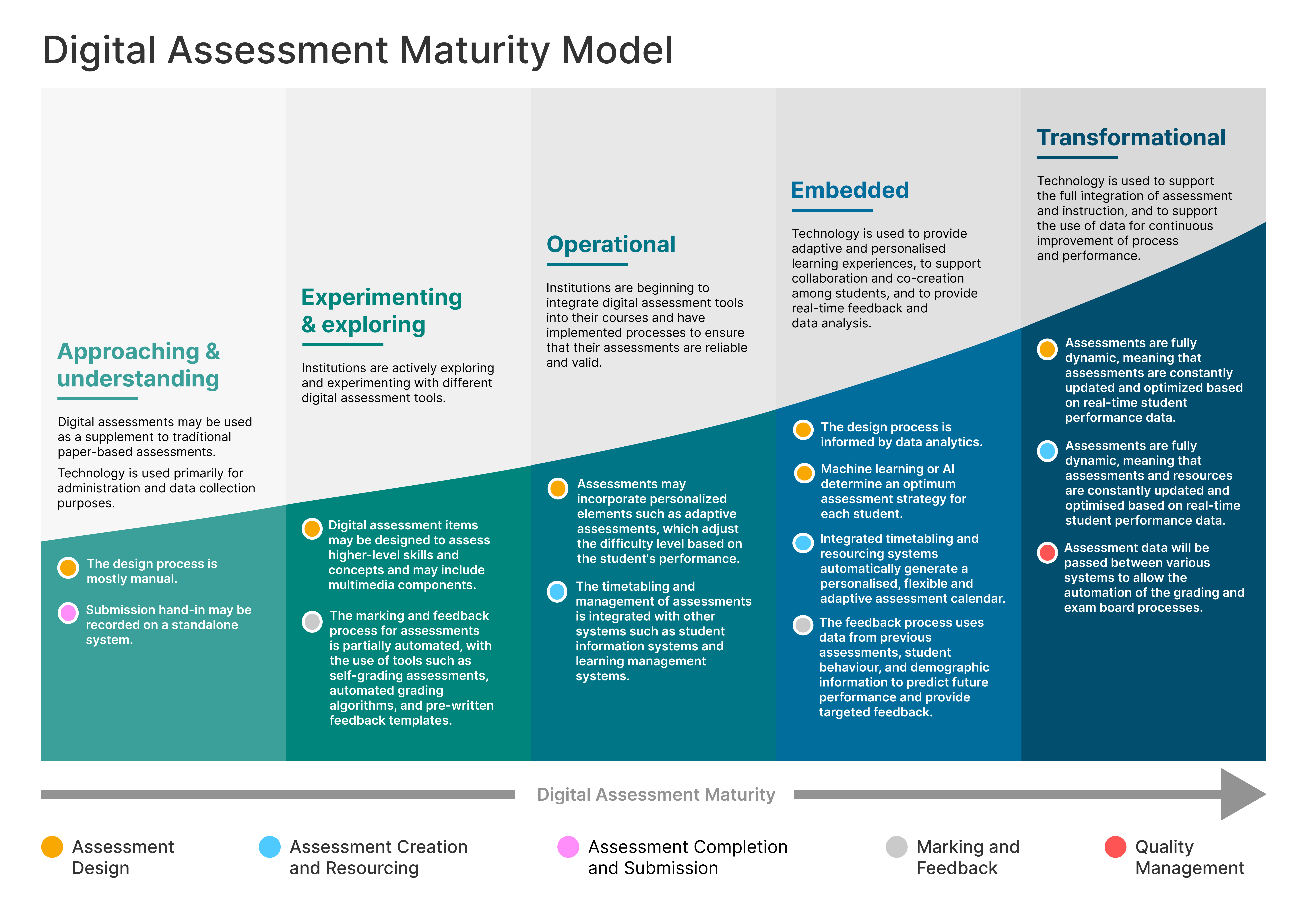

As part of our work on a digital assessment maturity model, this post takes a more in-depth look at the quality management phase.

Quality management of assessment

At the approaching and understanding stage, student assessment data may be stored in basic, ad hoc tools such as Microsoft Excel. Data may be shared only by email or through on-premises network storage. Exam boards may be paper-based, in-person meetings supported by manual processes.

At the experimenting and exploring stage, digital assessment begins to be incorporated into the processes and management of assessment. Rubrics and standards may be evident, but technology is likely to play only a limited role in supporting quality control.

At the operational stage, robust quality measures are evident, including peer review or testing. Dedicated software packages are likely to be used to record, distribute and analyse academic outcomes. Assessment data will be passed between the relevant systems, allowing grade return, feedback collation and management, and exam board processes to be automated. Where they have not been explicitly designed out, plagiarism and academic integrity tools may also be used.

The embedded stage involves more sophisticated quality control measures, such as digital processes to support external validation, sampling, or accreditation with disciplinary bodies. Assessment data and validated results will be synchronised across multiple systems and may be used to provide insight into student performance and to support predictive analytics.

Where digital assessment is transformational, data analytics and machine learning are used in advanced ways to monitor and improve assessment quality. Assessment data from a variety of sources will be managed in a fully integrated and seamless way.
